Machine: System: LENOVO product: 20349 v: Lenovo Y50-70 Touch I don't think it's necessary for troubleshooting purposes, but here's my machine's data just in case: This is a dual boot machine, so I do have the option of restarting in Windows mode and downloading a program called Similarity, the free version of which works well, but I shouldn't have to do that, should I?Īm I doing something wrong? I've played with the options and haven't gotten any better results. All the way down to 1% and still nothing. I put those files in the ignore list and tried it again-after adjusting the "filter hardness" down from 95% to 85%. I was hoping that DupeGuru would discover them and allow me to choose which ones to keep (based on size, resolution, etc.). They are the exact same photos but different dimensions. I know for a fact that I have many duplicate files (at least 117) because I know that I downloaded them and put them on my external hard drive. The files it found were very similar, but not duplicates.

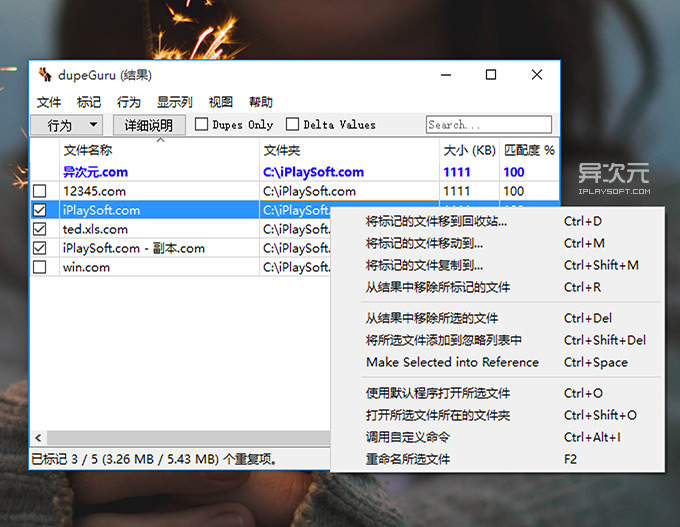

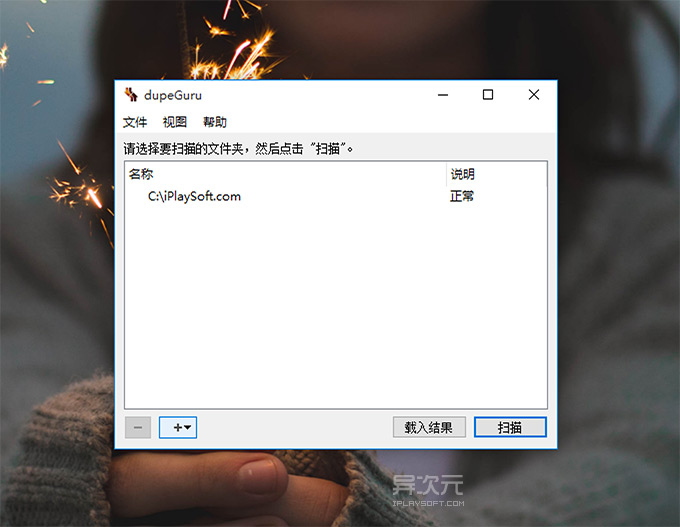

I ran it for the first time last night and it found 4 duplicate files. Now that I’ve cleaned up most of my mess, I realize that instead of having 8 or 900Gb of data like I imagined, reality is closer to 300Gb.I downloaded and installed DupeGuru after reading about how highly regarded it is. I ran it on my well-organized Music folder and discovered 5Gb of duplicate data in there - in less than a minute! Once you’ve found duplicates, you can choose to view only the duplicates, sort them by size or folder, delete, copy or move them.Īs with any duplicate-finder programme, you cannot just use it blindly, but it’s an invaluable assistant in freeing space. That means you tell dupeGuru “stuff in here is good, don’t touch it, but I want to know if I have duplicate copies of that content lying around”. Now, one nice thing about dupeGuru is that you can specify a “reference” folder when you choose where to hunt for duplicates. Easier to clean if everything is in one place! I also used my (now clean) 500Gb to copy some folder structures I knew were clean. The first thing I did was create a directory on my home server and copy all my external hard drives there. Lack of a clear backup strategy leads to massive, uncontrolled and disorganized data redundancy. This prompted me to coin the following law: I quickly realized (no surprise) that I had huge amounts of redundant data. Plus, it’s released as Fairware, which I find a very interesting compensation model: as long as there are uncompensated hours of work on the project, you’re encouraged to contribute to it, and the whole process is visible online.īack to data. (It works on OSX, Windows, and Linux.) It’s been an invaluable assistance in showing me where my huge chunks of redundant data are. Thanks to a kind soul on IRC, I have finally found the de-duping love of my life. I’ve wanted a programme like this for ages, without really taking the time to find it.
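To make the “reference” folder idea concrete, here is a minimal Python sketch of the concept. It is not how dupeGuru is implemented; the function names (`file_digest`, `find_duplicates_of_reference`) are made up for illustration, and it only catches byte-for-byte identical files, so it would not spot the same photo saved at different dimensions.

```python
import hashlib
from pathlib import Path


def file_digest(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def find_duplicates_of_reference(reference_dir: Path, scan_dir: Path) -> dict:
    """Map each reference file to the identical copies found under scan_dir.

    Hypothetical helper: files inside reference_dir are treated as "good"
    and are never reported as duplicates themselves.
    """
    reference_dir = reference_dir.resolve()

    # Index the trusted files by content hash (first file wins on ties).
    by_digest = {}
    for p in sorted(reference_dir.rglob("*")):
        if p.is_file():
            by_digest.setdefault(file_digest(p), p)

    # Walk the scan area and report anything matching a reference file.
    matches = {}
    for p in sorted(scan_dir.resolve().rglob("*")):
        if not p.is_file() or reference_dir in p.parents:
            continue  # skip directories and the reference folder itself
        original = by_digest.get(file_digest(p))
        if original is not None:
            matches.setdefault(original, []).append(p)
    return matches
```

Calling `find_duplicates_of_reference(Path("clean"), Path("everything"))` returns a dictionary whose keys are the files to keep and whose values are the stray copies. As with dupeGuru itself, the result is a list to review, not something to delete blindly.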
